google_speech

This package provides a pure Dart implementation of the Google Speech API over gRPC. Because it uses gRPC, it also supports the streaming transcription offered by the Google Speech API.

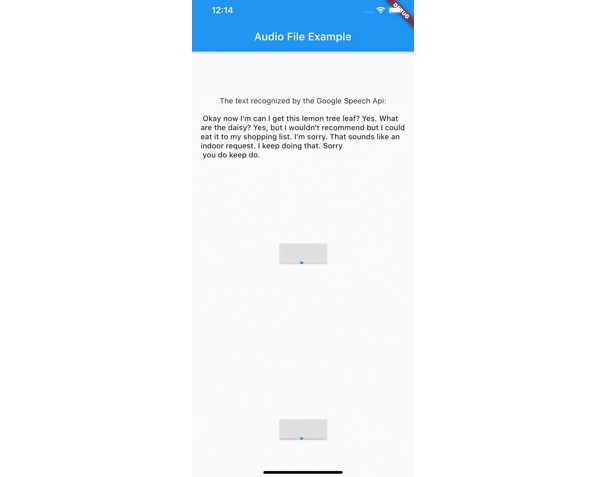

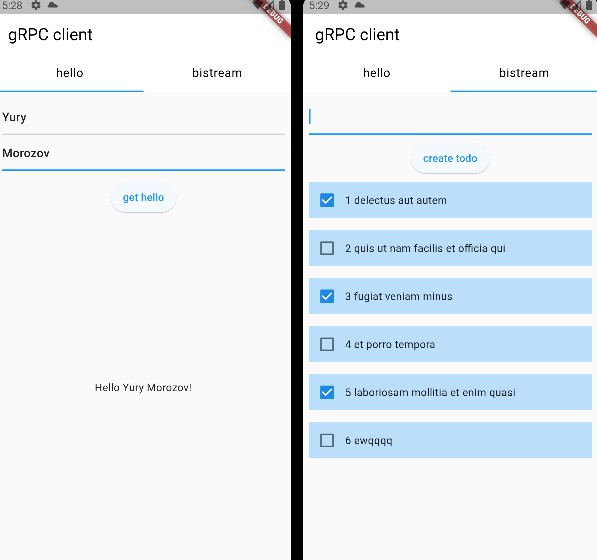

Demo recognize

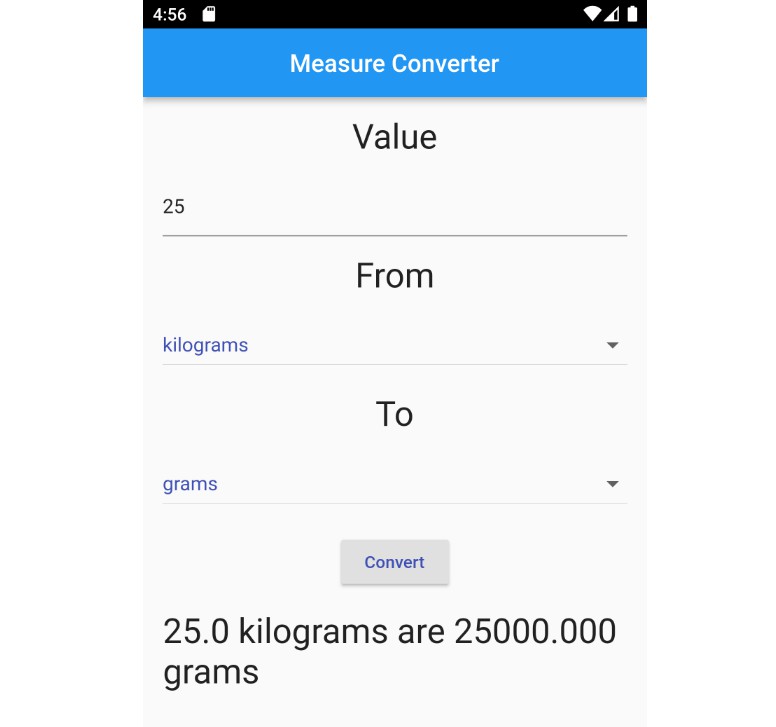

Demo Streaming

Getting Started

Authentication via a service account

There are two ways to authenticate with a service account. The first is to pass the JSON key file directly. Make sure that the file exists at the path you pass and that it has a .json extension.

import 'dart:io';

import 'package:google_speech/speech_client_authenticator.dart';

final serviceAccount = ServiceAccount.fromFile(File('PATH_TO_FILE'));
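The two conditions above (the file exists and ends in .json) can be checked before the file is handed to ServiceAccount.fromFile. The following is a minimal sketch; `checkServiceAccountPath` is a hypothetical helper written for this example and is not part of google_speech.

```dart
import 'dart:io';

/// Hypothetical helper, not part of google_speech.
/// Returns null when [path] looks usable, otherwise a short reason.
String? checkServiceAccountPath(String path) {
  // The README asks for a .json extension, so check that first.
  if (!path.toLowerCase().endsWith('.json')) {
    return 'expected a .json file';
  }
  // Then confirm the file is actually present on disk.
  if (!File(path).existsSync()) {
    return 'file not found';
  }
  return null;
}
```

Calling the helper first and only constructing the ServiceAccount when it returns null gives a readable error message instead of a failure later in the authentication step.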

The second option is to pass the JSON data directly as a string. This is useful, for example, when the data is loaded from an external service first so that it does not have to be bundled with the app.

final serviceAccount = ServiceAccount.fromString(r'''{YOUR_JSON_STRING}''');

// Or load the data from assets (rootBundle comes from package:flutter/services.dart)

final serviceAccount = ServiceAccount.fromString(
    await rootBundle.loadString('assets/test_service_account.json'));
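When the JSON string comes from an external service, it can help to check it before passing it to ServiceAccount.fromString. The sketch below looks for three fields that appear in Google service account key files (`type`, `private_key`, `client_email`). Which fields google_speech itself requires is an assumption here, and `missingServiceAccountFields` is a hypothetical helper, not part of the package.

```dart
import 'dart:convert';

/// Hypothetical pre-check, not part of google_speech.
/// Returns the names of expected string fields that are absent from [json].
/// Throws a FormatException if [json] is not valid JSON at all.
List<String> missingServiceAccountFields(String json) {
  // Assumed field names, taken from the layout of service account key files.
  const required = ['type', 'private_key', 'client_email'];
  final decoded = jsonDecode(json);
  if (decoded is! Map<String, dynamic>) {
    // Valid JSON, but not an object: every field is missing.
    return required;
  }
  return required.where((key) => decoded[key] is! String).toList();
}
```

An empty result means the string at least has the expected shape; it does not prove that the credentials are valid.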

Once the ServiceAccount has been created, you can start using the API.

Initialize SpeechToText

import 'package:google_speech/google_speech.dart';

final speechToText = SpeechToText.viaServiceAccount(serviceAccount);

Transcribing a file using recognize

Define a RecognitionConfig

final config = RecognitionConfig(
    encoding: AudioEncoding.LINEAR16,
    model: RecognitionModel.basic,
    enableAutomaticPunctuation: true,
    sampleRateHertz: 16000,
    languageCode: 'en-US');

Get the contents of the audio file

// getApplicationDocumentsDirectory comes from package:path_provider.
Future<List<int>> _getAudioContent(String name) async {
  final directory = await getApplicationDocumentsDirectory();
  final path = '${directory.path}/$name';
  // Read asynchronously so the calling isolate is not blocked.
  return File(path).readAsBytes();
}

final audio = await _getAudioContent('test.wav');

And finally send the request

final response = await speechToText.recognize(config, audio);
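Because recognize sends the whole file in a single request, it can be useful to estimate how long the audio is before sending it. Google documents a limit of roughly one minute of audio for synchronous requests; treat that threshold as an assumption and check the current quota documentation. The sketch below does the arithmetic for raw LINEAR16 audio (2 bytes per sample). `linear16Duration` is a hypothetical helper, not part of google_speech, and for a WAV file the result is slightly high because the header bytes are counted as audio.

```dart
/// Hypothetical helper, not part of google_speech.
/// Estimates the duration of raw LINEAR16 (16-bit PCM) audio from its size.
Duration linear16Duration(int byteLength,
    {int sampleRateHertz = 16000, int channels = 1}) {
  const bytesPerSample = 2; // LINEAR16 stores each sample in 16 bits.
  final bytesPerSecond = sampleRateHertz * bytesPerSample * channels;
  // Multiply before dividing to keep sub-second precision.
  return Duration(
      microseconds:
          byteLength * Duration.microsecondsPerSecond ~/ bytesPerSecond);
}
```

With the config shown above (16000 Hz, one channel), 32000 bytes correspond to one second of audio, so a file of about 1.9 MB is already close to one minute.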

Transcribing a file using streamingRecognize

Define a StreamingRecognitionConfig

final streamingConfig = StreamingRecognitionConfig(config: config, interimResults: true);

Get the contents of the audio file as a stream, or get an audio stream directly from a microphone input

Future<Stream<List<int>>> _getAudioStream(String name) async {
  final directory = await getApplicationDocumentsDirectory();
  final path = '${directory.path}/$name';
  return File(path).openRead();
}

final audio = await _getAudioStream('test.wav');
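File.openRead delivers chunks whose size is chosen by the Dart runtime, while a microphone usually delivers small frames. If you want a file stream to resemble microphone input, you can re-slice it into fixed-size chunks. The sketch below is plain Dart and makes no claim about what google_speech or the API requires; `rechunk` is a hypothetical helper, and 3200 bytes (100 ms of 16000 Hz mono LINEAR16 audio) is only an illustrative chunk size.

```dart
/// Hypothetical helper, not part of google_speech.
/// Re-slices [source] into chunks of exactly [chunkSize] bytes; the last
/// chunk may be shorter if the total length is not a multiple of [chunkSize].
Stream<List<int>> rechunk(Stream<List<int>> source, int chunkSize) async* {
  final buffer = <int>[];
  await for (final data in source) {
    buffer.addAll(data);
    // Emit as many full chunks as the buffer currently holds.
    while (buffer.length >= chunkSize) {
      yield buffer.sublist(0, chunkSize);
      buffer.removeRange(0, chunkSize);
    }
  }
  // Flush whatever is left once the source is done.
  if (buffer.isNotEmpty) {
    yield List<int>.of(buffer);
  }
}
```

The result of `rechunk(audio, 3200)` is again a `Stream<List<int>>`, so it can be used wherever the original audio stream would be.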

And finally send the request

final responseStream = speechToText.streamingRecognize(streamingConfig, audio);

responseStream.listen((data) {
  // Handle each streaming response here
});

More information can be found in the official Google Cloud Speech documentation.

Getting empty response (Issue #25)

If google_speech returns an empty response, the cause is most likely the recorded audio file.

More information is available in the Google Cloud troubleshooting guide: https://cloud.google.com/speech-to-text/docs/troubleshooting#returns_an_empty_response
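One thing worth checking on the audio file is whether its actual format matches the RecognitionConfig, for example LINEAR16 at 16000 Hz. That a mismatch is the cause in your case is an assumption, and google_speech does not perform this check for you. The sketch below reads the format fields from a canonical 44-byte PCM WAV header; `WavFormat` and `readWavFormat` are hypothetical names, and files with extra chunks before the "fmt " chunk are not handled.

```dart
import 'dart:typed_data';

/// Format fields read from a canonical 44-byte PCM WAV header.
/// Hypothetical type, not part of google_speech.
class WavFormat {
  WavFormat(
      this.audioFormat, this.channels, this.sampleRate, this.bitsPerSample);
  final int audioFormat; // 1 means uncompressed PCM
  final int channels;
  final int sampleRate;
  final int bitsPerSample;

  /// True when the file is 16-bit PCM at the given sample rate.
  bool matchesLinear16(int sampleRateHertz) =>
      audioFormat == 1 && bitsPerSample == 16 && sampleRate == sampleRateHertz;
}

/// Returns null if [bytes] does not start with "RIFF", then "WAVE" and "fmt "
/// at the canonical offsets.
WavFormat? readWavFormat(List<int> bytes) {
  if (bytes.length < 44) return null;
  final tag = String.fromCharCodes(bytes.sublist(0, 4)) +
      String.fromCharCodes(bytes.sublist(8, 16));
  if (tag != 'RIFFWAVEfmt ') return null;
  final view = ByteData.sublistView(Uint8List.fromList(bytes));
  // All multi-byte fields in a WAV header are little-endian.
  return WavFormat(
    view.getUint16(20, Endian.little), // audio format
    view.getUint16(22, Endian.little), // channel count
    view.getUint32(24, Endian.little), // sample rate
    view.getUint16(34, Endian.little), // bits per sample
  );
}
```

Passing the bytes of the recorded file to `readWavFormat` and calling `matchesLinear16(16000)` on the result shows quickly whether the recording and the config disagree.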

Use Google Speech Beta

Since version 1.1.0, google_speech also supports the features available in the Google Speech Beta API. To use them, use SpeechToTextBeta instead of SpeechToText.

ROADMAP 2022

- Update streamingRecognize example

- Add error messages for Google Speech limitations

- Add Flutter Web Support

- Add longRunningRecognize support (Thanks to @spenceralbrecht)

- Add infinite stream support

- Add more tests